如何使用python爬取知乎热榜Top50数据

1、导入第三方库

import urllib.request,urllib.error #请求网页 from bs4 import BeautifulSoup # 解析数据 import sqlite3 # 导入数据库 import re # 正则表达式 import time # 获取当前时间

2、程序的主函数

def main():

# 声明爬取网页

baseurl = "https://www.zhihu.com/hot"

# 爬取网页

datalist = getData(baseurl)

#保存数据

dbname = time.strftime("%Y-%m-%d", time.localtime()) #

dbpath = "zhihuTop50 " + dbname

saveData(datalist,dbpath)

3、正则表达式匹配数据

#正则表达式 findlink = re.compile(r'<a class="css-hi1lih" href="(.*?)" rel="external nofollow" rel="external nofollow" ') #问题链接 findid = re.compile(r'<div class="css-blkmyu">(.*?)</div>') #问题排名 findtitle = re.compile(r'<h1 class="css-3yucnr">(.*?)</h1>') #问题标题 findintroduce = re.compile(r'<div class="css-1o6sw4j">(.*?)</div>') #简要介绍 findscore = re.compile(r'<div class="css-1iqwfle">(.*?)</div>') #热门评分 findimg = re.compile(r'<img class="css-uw6cz9" src="(.*?)"/>') #文章配图

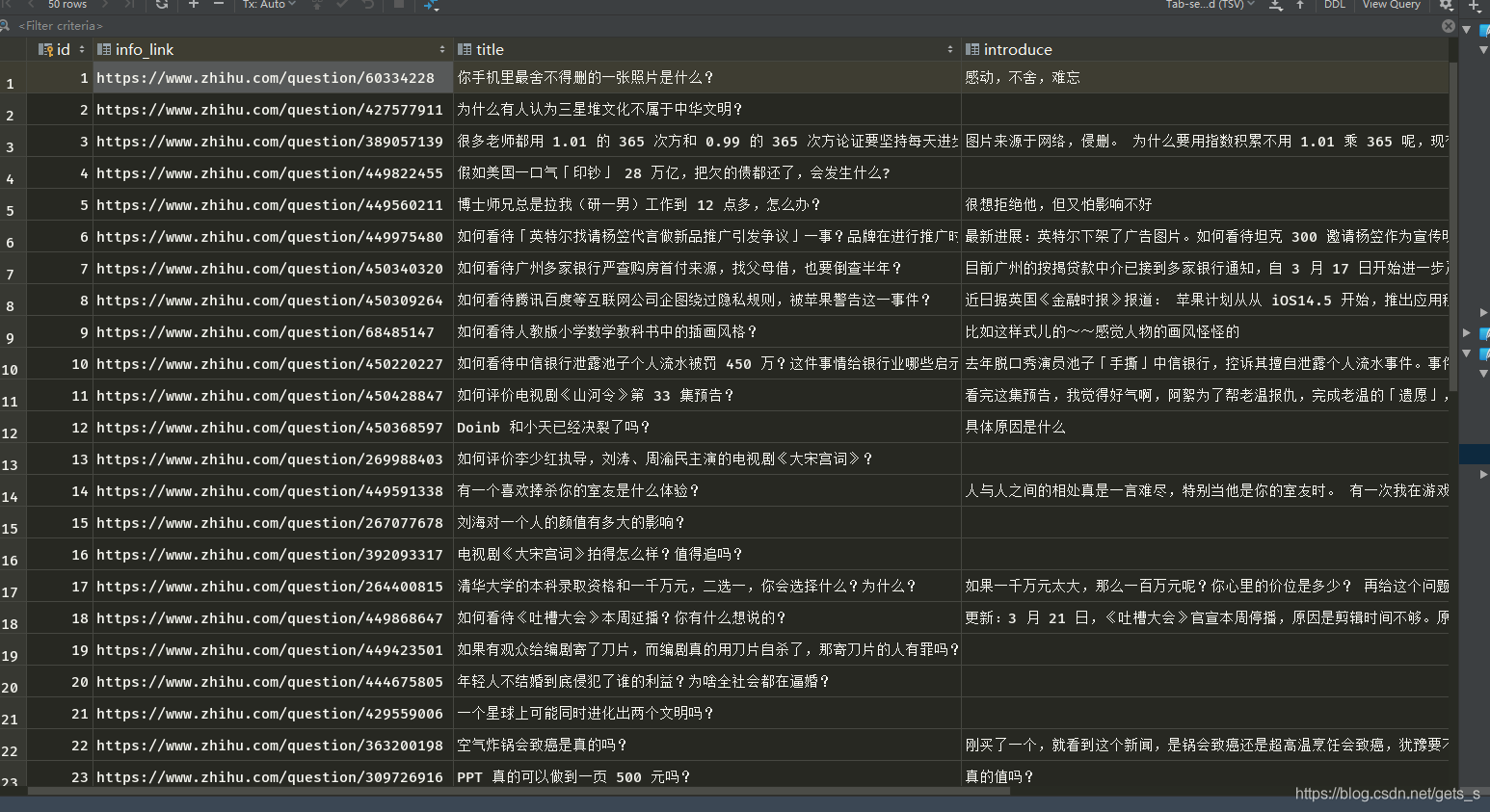

4、程序运行结果

5、程序源代码

import urllib.request,urllib.error

from bs4 import BeautifulSoup

import sqlite3

import re

import time

def main():

# 声明爬取网页

baseurl = "https://www.zhihu.com/hot"

# 爬取网页

datalist = getData(baseurl)

#保存数据

dbname = time.strftime("%Y-%m-%d", time.localtime())

dbpath = "zhihuTop50 " + dbname

saveData(datalist,dbpath)

print()

#正则表达式

findlink = re.compile(r'<a class="css-hi1lih" href="(.*?)" rel="external nofollow" rel="external nofollow" ') #问题链接

findid = re.compile(r'<div class="css-blkmyu">(.*?)</div>') #问题排名

findtitle = re.compile(r'<h1 class="css-3yucnr">(.*?)</h1>') #问题标题

findintroduce = re.compile(r'<div class="css-1o6sw4j">(.*?)</div>') #简要介绍

findscore = re.compile(r'<div class="css-1iqwfle">(.*?)</div>') #热门评分

findimg = re.compile(r'<img class="css-uw6cz9" src="(.*?)"/>') #文章配图

def getData(baseurl):

datalist = []

html = askURL(baseurl)

# print(html)

soup = BeautifulSoup(html,'html.parser')

for item in soup.find_all('a',class_="css-hi1lih"):

# print(item)

data = []

item = str(item)

Id = re.findall(findid,item)

if(len(Id) == 0):

Id = re.findall(r'<div class="css-mm8qdi">(.*?)</div>',item)[0]

else: Id = Id[0]

data.append(Id)

# print(Id)

Link = re.findall(findlink,item)[0]

data.append(Link)

# print(Link)

Title = re.findall(findtitle,item)[0]

data.append(Title)

# print(Title)

Introduce = re.findall(findintroduce,item)

if(len(Introduce) == 0):

Introduce = " "

else:Introduce = Introduce[0]

data.append(Introduce)

# print(Introduce)

Score = re.findall(findscore,item)[0]

data.append(Score)

# print(Score)

Img = re.findall(findimg,item)

if (len(Img) == 0):

Img = " "

else: Img = Img[0]

data.append(Img)

# print(Img)

datalist.append(data)

return datalist

def askURL(baseurl):

# 设置请求头

head = {

# "User-Agent": "Mozilla/5.0 (Windows NT 10.0;Win64;x64) AppleWebKit/537.36(KHTML, likeGecko) Chrome/80.0.3987.163Safari/537.36"

"User-Agent": "Mozilla / 5.0(iPhone;CPUiPhoneOS13_2_3likeMacOSX) AppleWebKit / 605.1.15(KHTML, likeGecko) Version / 13.0.3Mobile / 15E148Safari / 604.1"

}

request = urllib.request.Request(baseurl, headers=head)

html = ""

try:

response = urllib.request.urlopen(request)

html = response.read().decode("utf-8")

# print(html)

except urllib.error.URLError as e:

if hasattr(e, "code"):

print(e.code)

if hasattr(e, "reason"):

print(e.reason)

return html

print()

def saveData(datalist,dbpath):

init_db(dbpath)

conn = sqlite3.connect(dbpath)

cur = conn.cursor()

for data in datalist:

sql = '''

insert into Top50(

id,info_link,title,introduce,score,img)

values("%s","%s","%s","%s","%s","%s")'''%(data[0],data[1],data[2],data[3],data[4],data[5])

print(sql)

cur.execute(sql)

conn.commit()

cur.close()

conn.close()

def init_db(dbpath):

sql = '''

create table Top50

(

id integer primary key autoincrement,

info_link text,

title text,

introduce text,

score text,

img text

)

'''

conn = sqlite3.connect(dbpath)

cursor = conn.cursor()

cursor.execute(sql)

conn.commit()

conn.close()

if __name__ =="__main__":

main()

关于如何使用python爬取知乎热榜Top50数据的文章就介绍至此,更多相关python 爬取知乎内容请搜索编程宝库以前的文章,希望大家多多支持编程宝库!

如何使用python爬取B站排行榜Top100的视频数据:记得收藏呀!!! 1、第三方库导入from bs4 import BeautifulSoup # 解析网页import re # 正则表达式,进行文字匹配import ur ...